I’ve added some exciting new features to my TrackPerformer project, along with three new performances: We Three Kings, Carol of the Bells, and Joy to the World.

Filters

It’s now possible to add filters, or effects, into the processing chain. These filters can be applied before any performers (pre-filters) or after all performers (post-filters). At the moment, the included filters are:

- FPS: Calculates the average framerate across the performance, optionally displaying it in a DOM element.

- Grid: Draws a grid to the canvas. The grid may be a simple intersection grid (points), or lines. Both the X and Y axes may be independently configured.

- Pick: Probably the most interesting (and processor-intensive) part of TrackPerformer. The Pick filter randomly swaps a pixel with one of its neighbours. It’s used at full intensity on both We Three Kings and Joy to the World, and, when toned down a little for Carol of the Bells, provides a softening, organic effect.
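To make the ordering concrete, here is a minimal sketch of how a frame might pass through pre-filters, performers, and post-filters. It is not TrackPerformer’s actual code: `renderFrame`, `step`, and the option names are all made up for illustration, and the filters and performers are stand-ins that only record the order they ran in.

```javascript
// Hypothetical sketch: none of these names come from TrackPerformer itself.
function renderFrame(frame, { preFilters = [], performers = [], postFilters = [] }) {
  // Pre-filters run first, so performers draw on top of their output.
  for (const filter of preFilters) filter(frame);
  // Performers draw the notes themselves.
  for (const performer of performers) performer(frame);
  // Post-filters run last, so they affect everything drawn so far.
  for (const filter of postFilters) filter(frame);
  return frame;
}

// Stand-ins that just record the order they ran in.
const frame = { log: [] };
const step = (name) => (f) => f.log.push(name);

renderFrame(frame, {
  preFilters: [step('grid')],
  performers: [step('oscillator'), step('swarm')],
  postFilters: [step('pick'), step('fps')],
});

console.log(frame.log.join(' -> ')); // grid -> oscillator -> swarm -> pick -> fps
```

Putting Grid in the pre-filters and Pick in the post-filters here is just one plausible arrangement: a grid drawn first sits behind the performers, while a pixel effect applied last touches everything on the canvas.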

I’ve got some ideas for more filters down the track… The only difficulty is keeping performance acceptable: manipulation of the canvas pixel by pixel is quite slow in current browsers.
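For a feel of what that pixel-by-pixel work involves, here is a rough sketch of a Pick-style filter. It is an illustration rather than the real implementation: the `pick` function, its `intensity` parameter (treated as a per-pixel swap probability), and the clamping at the canvas edges are all assumptions. It works on a plain RGBA byte array laid out like a canvas `ImageData.data` buffer, so it runs without a browser.

```javascript
// Hypothetical sketch of a Pick-style filter; not TrackPerformer's actual code.
// `data` is an RGBA byte array laid out like ImageData.data (4 bytes per pixel).
function pick(data, width, height, intensity = 1, random = Math.random) {
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      // Assumption: `intensity` is the chance (0 to 1) that a pixel is swapped.
      if (random() >= intensity) continue;

      // Choose an offset of -1, 0 or 1 on each axis, clamped to the canvas.
      // (An offset of 0,0 swaps the pixel with itself, which changes nothing.)
      const nx = Math.min(width - 1, Math.max(0, x + Math.floor(random() * 3) - 1));
      const ny = Math.min(height - 1, Math.max(0, y + Math.floor(random() * 3) - 1));

      const a = (y * width + x) * 4;
      const b = (ny * width + nx) * 4;

      // Swap all four channels (R, G, B, A) between the two pixels.
      for (let c = 0; c < 4; c++) {
        const tmp = data[a + c];
        data[a + c] = data[b + c];
        data[b + c] = tmp;
      }
    }
  }
  return data;
}

// A 2x2 test image where each pixel has a distinct red value.
const pixels = new Uint8ClampedArray([
  10, 0, 0, 255,   20, 0, 0, 255,
  30, 0, 0, 255,   40, 0, 0, 255,
]);
pick(pixels, 2, 2, 1);
```

In a browser, the buffer would come from the 2D context’s `getImageData()` and be written back with `putImageData()`. Even in this simple form the inner loop runs once per pixel per frame, which is where the cost comes from.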

Performers

There are a couple of new performers, and some minor updates to some of the existing ones. The Oscillator performer, in particular, is rapidly becoming the most flexible and useful of the performers.

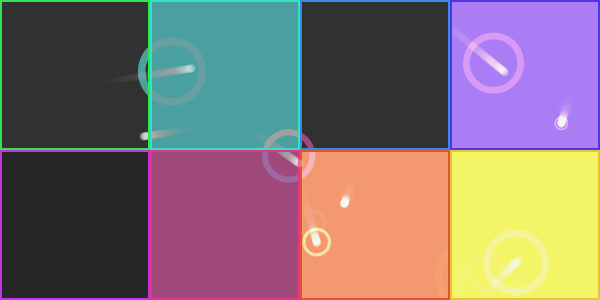

- There’s a new ShimmerGrid performer, which is great for adding texture and movement to the entire canvas. You can see it in action particularly well on Joy to the World.

- The Swarm performer can now draw its particles either as knots (like the SignalTracker) or as dots.

- The Oscillator now has the ability to draw sustained notes, and to increase the longevity of notes. Take a look at Carol of the Bells to see these new options in use.

- Notes can now be filtered based not only on their pitch, but also on their velocity (volume).
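As an illustration of the pitch and velocity filtering, here is a small sketch. The note shape (`pitch`, `velocity`), the `makeNoteFilter` helper, and its option names are assumptions for the example rather than TrackPerformer’s real configuration, and it assumes MIDI-style values in the range 0 to 127.

```javascript
// Hypothetical sketch: the note shape and option names are assumptions,
// not TrackPerformer's real configuration. Values are MIDI-style (0 to 127).
function makeNoteFilter({ minPitch = 0, maxPitch = 127, minVelocity = 0, maxVelocity = 127 } = {}) {
  // Returns a predicate that accepts notes inside both ranges.
  return (note) =>
    note.pitch >= minPitch && note.pitch <= maxPitch &&
    note.velocity >= minVelocity && note.velocity <= maxVelocity;
}

const notes = [
  { pitch: 48, velocity: 100 }, // low and loud
  { pitch: 72, velocity: 30 },  // high and quiet
  { pitch: 76, velocity: 110 }, // high and loud
];

// Keep only loud notes at or above middle C (MIDI pitch 60).
const loudHighNotes = notes.filter(makeNoteFilter({ minPitch: 60, minVelocity: 64 }));
console.log(loudHighNotes); // [ { pitch: 76, velocity: 110 } ]
```

A filter like this would let one performer react only to the loud, high notes while another handles the rest of the track.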

There are a couple of other changes here and there, but these are the main ones.

We Three Kings

The three new example tracks are all taken from We Three Kings, my new Christmas remix album. Why not go and have a listen?